The New Physics of Productivity

BLUF

Productivity is no longer about who works hardest. It is about where work goes, and whether that decision is deliberate.

Figure 1: The future of work is not a workforce reduction. It is a workforce elevation. One person governs what thousands of machines execute. That is not a smaller role. It is a bigger one. Image by GenAI w/ J Eselgroth

We Are Asking the Wrong Question

Most organizations frame this as an AI problem. It is not.

It is a routing problem. Work lands where it has always landed, not where it should land. People absorb tasks that machines could handle. Machines run processes that humans should govern. AI tools get deployed without a clear answer to the most basic operational question: who, or what, should be doing this?

The data confirms the gap. The World Economic Forum reports that 47% of enterprise tasks are performed mainly by humans, 22% mainly by technology, and 30% by a combination of both.[1] Most leaders look at those numbers and see a workforce story. The more useful read: nearly a third of work is already in a hybrid execution state, and organizations are managing that reality without a routing architecture.

The question is no longer whether machines participate in work. They already do. The question is whether that participation is designed or accidental.

Three Layers. One Decision.

For decades, organizations had two execution options. Automation or humans. The structure was simple.

The first option was the CPU layer, the Central Processing Unit of organizational work. Rule-based. Deterministic. Reliable within fixed parameters. Payroll calculations, file routing, workflow triggers, system integrations. The CPU layer does exactly what it is told. It does not interpret, adapt, or reason. That is its strength and its limit.

The second option was the HPU layer, the Human Performance Unit. Judgment. Accountability. Ambiguity resolution. Humans handled everything the CPU layer could not. That included high-stakes decisions. It also included an enormous volume of routine cognitive work that defaulted to people simply because no other option existed. Summarizing reports. Triaging requests. Drafting first-pass communications. Comparing policies. Work that consumed capacity without requiring genuine judgment.

That constraint no longer holds.

A third layer now exists between the CPU and HPU. This is the GPU layer, the Graphics Processing Unit of organizational reasoning. Not the chip in a data center. The operational analogy: a system built to run massive parallel interpretation at scale. Generative AI, predictive models, classification engines, large-scale language inference. These systems handle probabilistic reasoning, pattern recognition, language synthesis, and decision support. They are not rule-followers. They are not humans. They are a new execution class.

Three layers. One architectural decision for every task your organization runs:

CPU Layer (Central Processing Unit): Deterministic execution. Structured inputs. Fixed rules. High reliability, zero interpretation. Payroll, scheduling, system integrations, automated workflows.

GPU Layer (Graphics Processing Unit): Machine reasoning. Probabilistic inference. Pattern recognition. Language and context interpretation. Drafting, summarization, triage, classification, anomaly detection, first-pass analysis.

HPU Layer (Human Performance Unit): Human judgment. Ambiguity resolution. Ethical tradeoffs. Strategic accountability. Decisions that carry consequence, require unique context, or demand clear ownership.

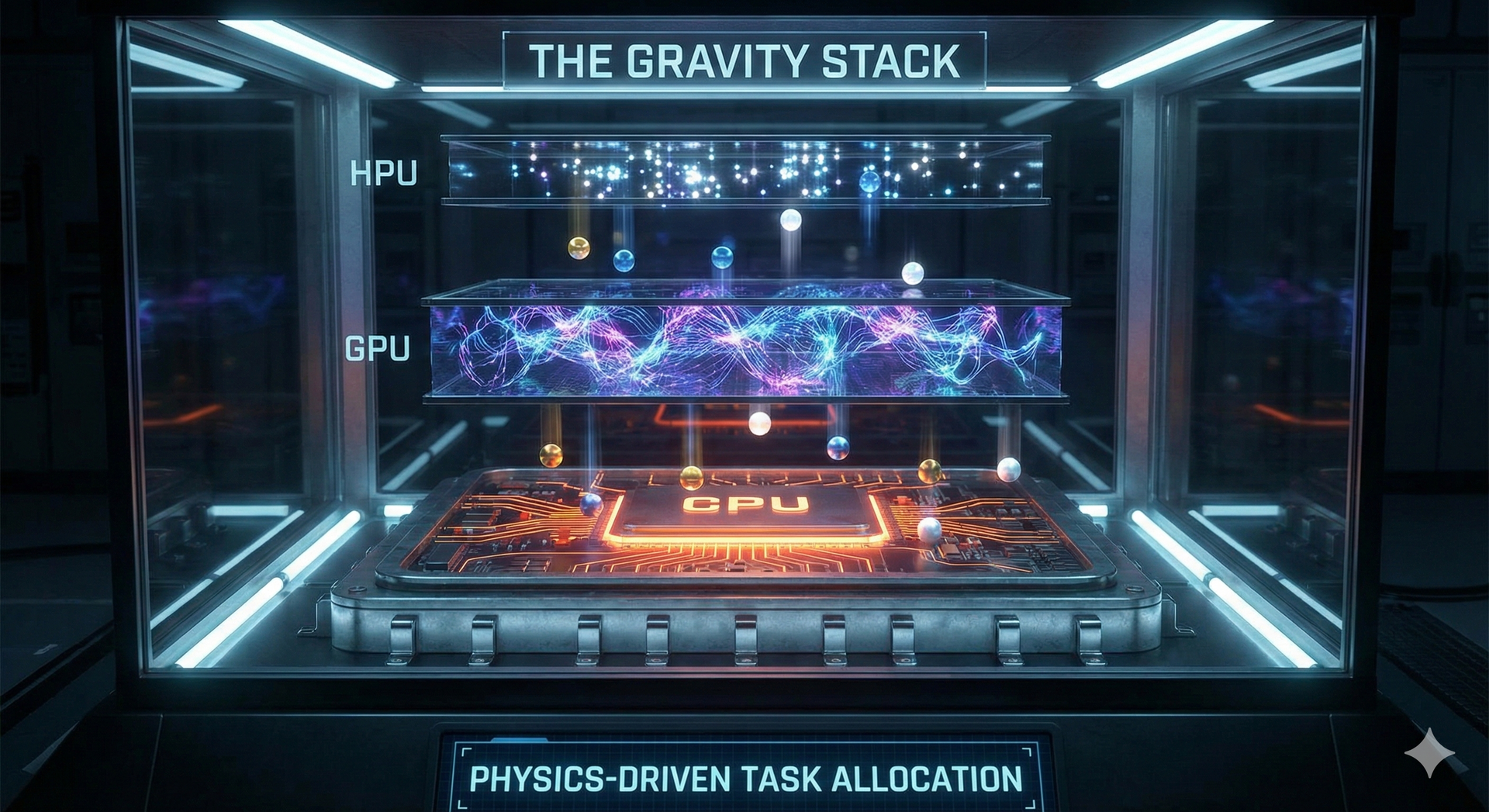

Figure 2: The Gravity Stack visualizes the core routing principle. Tasks enter the system and fall toward the lowest cognition layer that can safely execute them. Most land in the CPU layer at the base. Interpretive and pattern-recognition work settles in the GPU layer. Only the decisions that carry real consequence, ambiguity, or accountability rise to the HPU tier at the top. The physics are simple. The organizational discipline to design around them is not. Source: Chiron AI. Image by GenAI w/ J Eselgroth

The terminology is not cosmetic. It forces a specific operational question. For any given task, which layer owns it? That question, asked consistently, is the difference between a productivity strategy and a technology spending plan.

Work Has a Gravitational Pull

There is a simple law operating underneath all of this.

Tasks migrate toward the lowest cognition layer that can safely execute them. CPU execution is the cheapest. GPU execution is the next cheapest. HPU execution is the most expensive. That cost gradient creates a persistent gravitational pull. Over time, work flows downstack as automation improves, AI reasoning matures, and organizational trust in machine outputs grows.
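The routing law can be sketched in a few lines of Python. This is a minimal illustration, not a production router. The `Layer` ordering, the `Task` fields, and the `route` function are hypothetical names introduced here, and the judgment about which layers can safely execute a task is assumed to come from whoever audits the workflow.

```python
from dataclasses import dataclass
from enum import IntEnum


class Layer(IntEnum):
    """Cognition layers, ordered from cheapest to most expensive execution."""
    CPU = 1  # deterministic automation
    GPU = 2  # machine reasoning
    HPU = 3  # human judgment


@dataclass(frozen=True)
class Task:
    name: str
    # Layers judged able to execute this task safely. This is an assumed
    # input: making that judgment is the hard part and is not modeled here.
    safe_layers: frozenset


def route(task: Task) -> Layer:
    """Return the lowest cognition layer that can safely execute the task."""
    if not task.safe_layers:
        # Nothing has been cleared as safe, so ownership defaults to a human.
        return Layer.HPU
    return min(task.safe_layers)


payroll = Task("payroll run", frozenset({Layer.CPU, Layer.HPU}))
triage = Task("request triage", frozenset({Layer.GPU, Layer.HPU}))
restructuring = Task("restructuring decision", frozenset({Layer.HPU}))

for task in (payroll, triage, restructuring):
    print(f"{task.name} -> {route(task).name}")
```

The design choice worth noting is the default: a task with no layer cleared as safe stays with a human rather than falling to the cheapest option.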

The problem is that most organizations let this happen passively. Work migrates when someone builds a workaround. When an employee discovers a tool on their own. When a vendor sells a point solution into one team. There is no routing architecture. There is no deliberate design.

Organizations that take routing seriously ask a different set of questions before any process is staffed. Which layer should handle this? What is blocking it from getting there? Is the obstacle technical, economic, regulatory, or cultural? Those questions, asked upstream, change the economics of every workflow downstream.

"Organizational effectiveness is not defined by how much AI an organization owns. It is defined by how consistently work is intercepted and routed before it reaches a human desk."

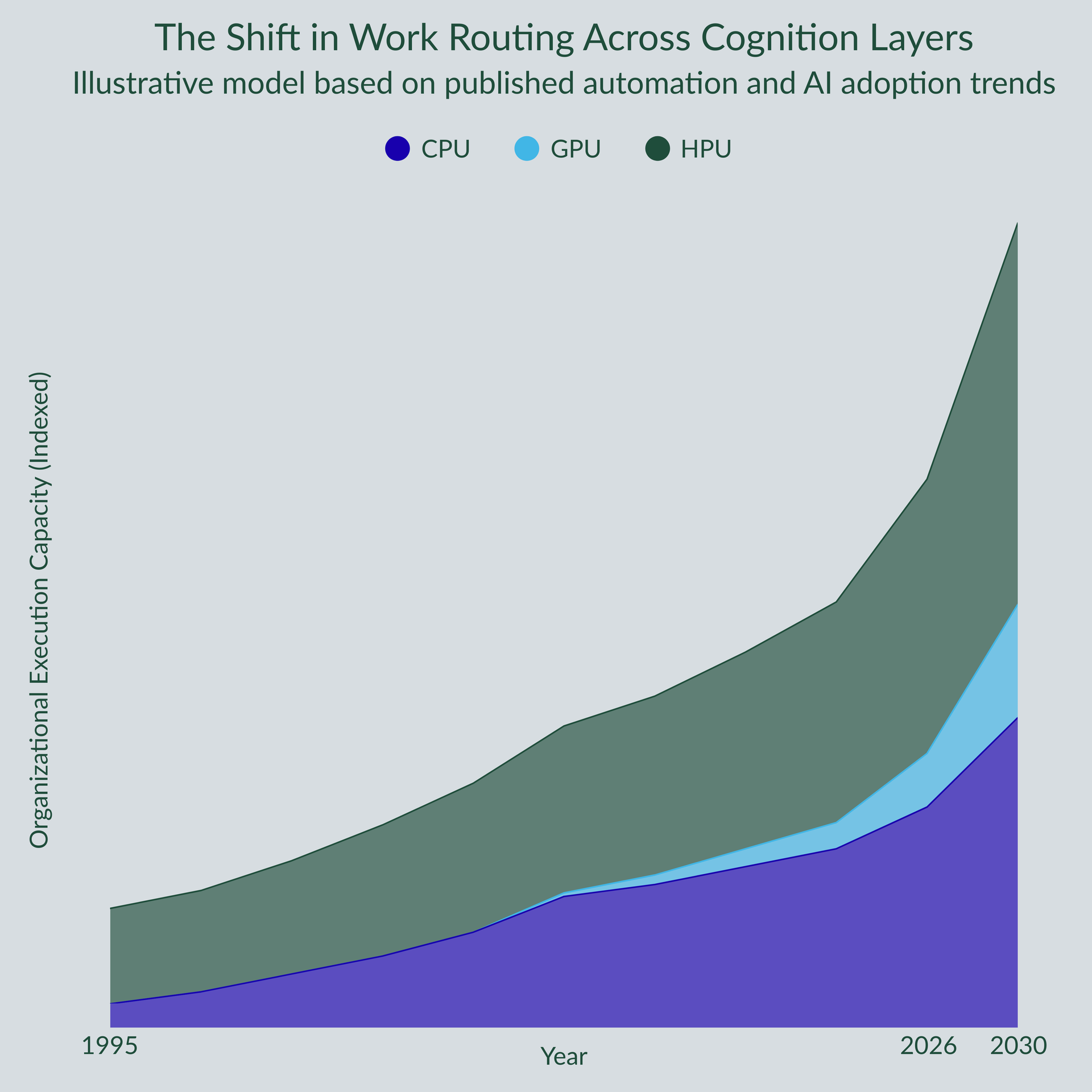

The chart below shows how this migration has evolved and where the trajectory points by 2030.

Figure 3: Work Routing Across Cognition Layers (1995-2030). Illustrative model based on published automation and AI adoption trends. Image by J Eselgroth

Humans Are Not Being Replaced. They Are Being Elevated.

This is the part most leaders misread.

As CPU and GPU layers absorb more execution, HPU involvement shrinks in volume. It grows in consequence. Humans make fewer decisions. Each decision they make carries more weight.

Microsoft and LinkedIn's 2024 Work Trend Index found that 75% of knowledge workers now use AI at work.[2] Even so, the average time savings among regular users is 30 to 60 minutes per day. AI is present across most workflows. It is embedded deeply in very few. The GPU layer is expanding. It has not yet captured the cognitive middle. That migration is still in progress.

The signal for leaders is not headcount reduction. It is role elevation. HPU decisions are becoming rarer, more expensive per unit, and more strategically consequential. The humans you retain need to operate at a higher level. Building toward that is the actual workforce strategy.

Why Adoption Lags Capability

Technical feasibility is not the governing constraint. Economics is.

MIT's research on automation cost-effectiveness found that even when AI can technically execute a task, the cost of integration, data preparation, process redesign, and governance often makes human execution still the rational choice.[3] The gap between what AI can do and what organizations actually deploy is not a technology gap. It is an economic and operational one.
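A simple break-even calculation makes the economic point concrete. The sketch below is a simplified model written for this article, not the method used in the cited research. The function names and every number are hypothetical, and the one-time cost is assumed to bundle integration, data preparation, process redesign, and governance.

```python
def break_even_tasks(human_cost, machine_cost, one_time_cost):
    """Tasks needed before the one-time cost is recovered, or None if never."""
    saving_per_task = human_cost - machine_cost
    if saving_per_task <= 0:
        return None  # the machine is not cheaper per task, so it never pays back
    return one_time_cost / saving_per_task


def automation_is_rational(human_cost, machine_cost, one_time_cost,
                           tasks_per_year, horizon_years):
    """True when machine execution costs less than human execution over the horizon."""
    volume = tasks_per_year * horizon_years
    human_total = human_cost * volume
    machine_total = one_time_cost + machine_cost * volume
    return machine_total < human_total


# Illustrative figures only: 4.00 per task by hand, 0.50 per task by machine,
# 250,000 up front to integrate, prepare data, redesign, and govern.
low_volume = automation_is_rational(4.00, 0.50, 250_000, 10_000, 3)
high_volume = automation_is_rational(4.00, 0.50, 250_000, 50_000, 3)
print(low_volume, high_volume)  # False True
```

With these assumed figures the same task, at the same technical feasibility, is worth automating at 50,000 runs a year and not at 10,000. Volume and up-front cost decide the outcome, not capability.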

McKinsey estimates that generative AI and related technologies could technically automate work activities that absorb 60 to 70% of employee time. Its adoption scenarios place the point at which half of today's work activities are automated somewhere between 2030 and 2060.[4] The physics are real. The timeline is constrained by data quality, regulatory exposure, organizational readiness, and the unglamorous work of process redesign.

AI experimentation moves fast. Operational embedding moves slowly. Both are true at the same time. Planning around only one of them produces the wrong strategy.

The Question Leaders Should Be Asking

Most transformation initiatives start with the wrong prompt: "Where can we deploy AI?"

The more useful prompt: "Which cognition layer should own this task, and what is preventing it from getting there?"

That reframe changes everything. It moves the conversation from tools to architecture. From adoption rates to routing decisions. From generic AI strategy to specific operational design.

For each significant workflow, the routing audit covers four questions. What is the cognitive complexity of each task inside this process? What is the cost of executing it at each layer? What regulatory or governance constraints shape the options? What would it take to move it one layer down?
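One way to keep the audit consistent is to record the four answers in a fixed structure. The sketch below is one possible shape, not a prescribed template. `RoutingAudit`, its field names, and `recommend` are hypothetical, the cost figures are illustrative, and the logic assumes that any recorded constraint or outstanding work blocks the move until it is resolved.

```python
from dataclasses import dataclass, field

LAYERS = ("CPU", "GPU", "HPU")  # ordered from lowest to highest cognition


@dataclass
class RoutingAudit:
    """One task's answers to the four audit questions (hypothetical schema)."""
    task: str
    current_layer: str
    complexity: str        # Q1: e.g. "rule-based", "interpretive", "judgment"
    cost_by_layer: dict    # Q2: estimated cost per execution, keyed by layer
    constraints: list = field(default_factory=list)      # Q3: regulatory or governance limits
    move_down_needs: list = field(default_factory=list)  # Q4: work needed to move one layer down


def recommend(audit: RoutingAudit) -> str:
    """Say whether the task can move one layer down and what stands in the way."""
    index = LAYERS.index(audit.current_layer)
    if index == 0:
        return f"{audit.task}: already at the CPU layer"
    target = LAYERS[index - 1]
    current_cost = audit.cost_by_layer.get(audit.current_layer)
    target_cost = audit.cost_by_layer.get(target)
    if current_cost is None or target_cost is None:
        return f"{audit.task}: keep at {audit.current_layer} (cost estimates incomplete)"
    if target_cost >= current_cost:
        return f"{audit.task}: keep at {audit.current_layer} ({target} is not cheaper)"
    obstacles = audit.constraints + audit.move_down_needs
    if obstacles:
        return f"{audit.task}: move to {target} after resolving: " + "; ".join(obstacles)
    return f"{audit.task}: move to {target} now"


audit = RoutingAudit(
    task="first-pass policy comparison",
    current_layer="HPU",
    complexity="interpretive",
    cost_by_layer={"GPU": 0.40, "HPU": 12.00},  # illustrative figures only
    constraints=["human sign-off required on final policy text"],
    move_down_needs=["clean document repository", "review workflow redesign"],
)
print(recommend(audit))
```

The output is a sentence a leader can act on: the target layer, plus the specific obstacles between the task and that layer.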

Modern leadership increasingly resembles traffic control. The goal is not to maximize activity inside any one layer. It is to ensure work reaches the most efficient reliable execution point. That is the job.

Where the Distribution Is Heading

The future state is not a uniform redistribution across three layers.

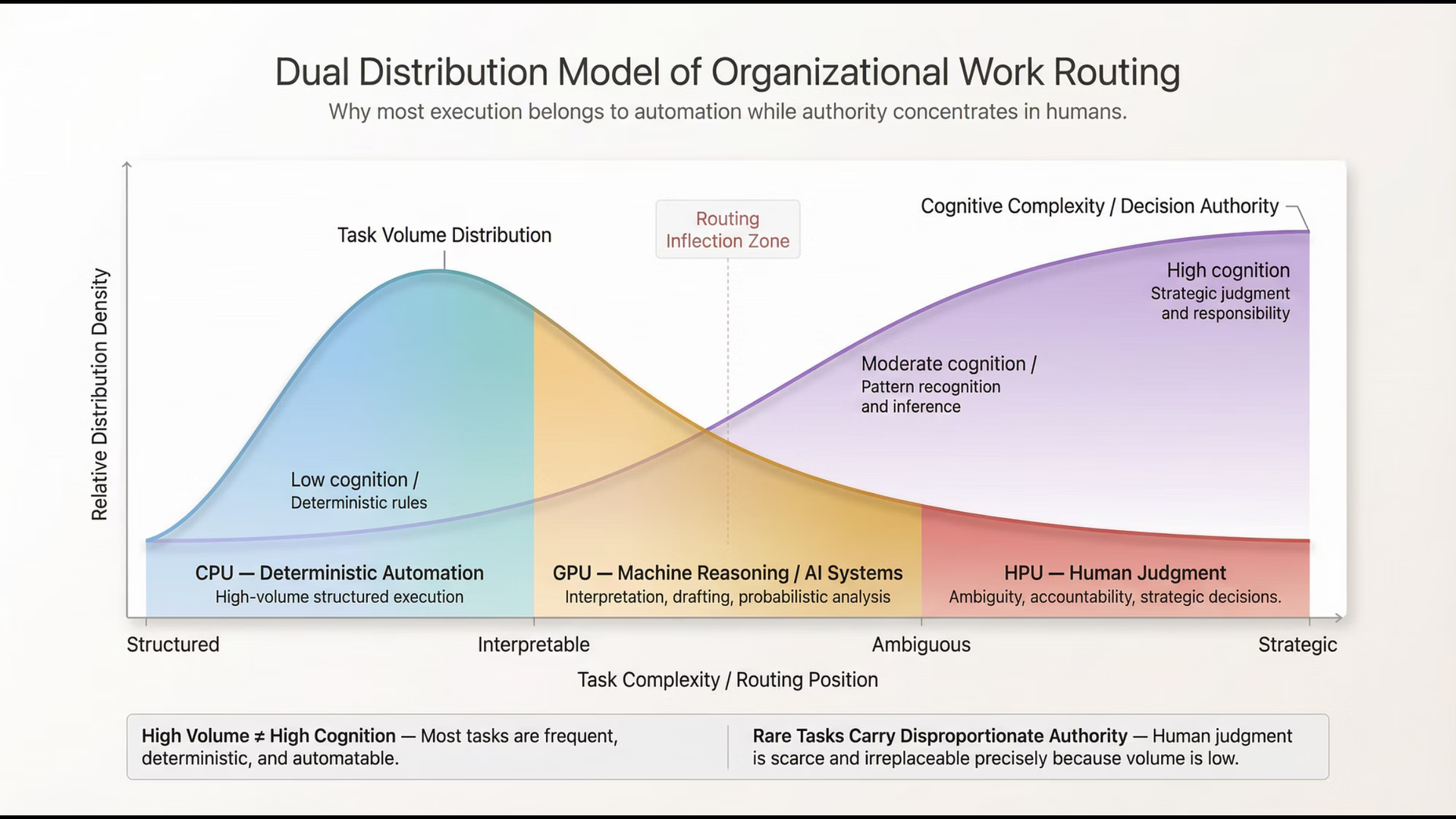

It looks more like a dual distribution. Large volumes of structured, repeatable work concentrating in the CPU layer at minimal cost. A rapidly expanding GPU layer handling the interpretive, language-heavy, pattern-recognition middle. A narrower but more consequential HPU layer where humans govern decisions that carry real organizational weight.

Task volume and cognitive authority move in opposite directions. The CPU layer dominates on volume. The HPU layer dominates on consequence. The GPU layer is the dynamic middle where the most significant transformation is currently happening and where the most competitive advantage is being built or missed.

Figure 4: Dual Distribution Model of Organizational Work Routing. Task volume concentrates in deterministic automation. Decision authority concentrates in human judgment. The GPU layer spans the interpretive middle where AI delivers the highest leverage. Image by GenAI w/ J Eselgroth

The organizations that outperform will not be those with the most AI licenses or the largest technology budgets. They will be the ones that most deliberately answer the routing question for every significant workflow they operate.

For most of management history, productivity meant getting people to work faster.

In the next decade, it will mean deciding which work should never reach people at all.

That decision does not make itself.

References

[1] World Economic Forum. Future of Jobs Report 2025. "Jobs Outlook: Task-Level Distribution." Geneva: WEF, 2025. weforum.org/publications/the-future-of-jobs-report-2025/

[2] Microsoft and LinkedIn. Work Trend Index Annual Report 2024. "AI at Work: What the Data Says." Redmond: Microsoft, 2024. news.microsoft.com/source/2024/05/08/microsoft-and-linkedin-release-the-2024-work-trend-index-on-the-state-of-ai-at-work/

[3] Svanberg, M., Li, W., et al. "Beyond AI Exposure: Which Tasks are Cost-Effective to Automate with Computer Vision?" SSRN, 2024. papers.ssrn.com/sol3/papers.cfm?abstract_id=5233833

[4] McKinsey Global Institute. "The Economic Potential of Generative AI: The Next Productivity Frontier." McKinsey and Company, 2023. mckinsey.com/capabilities/tech-and-ai/our-insights/the-economic-potential-of-generative-ai-the-next-productivity-frontier

[5] World Economic Forum. Future of Jobs Report 2023. Geneva: WEF, 2023. weforum.org/publications/the-future-of-jobs-report-2023/